No Backtracking: A hill-climbing algorithm only works on the current state and succeeding states (future).The greedy approach enables the algorithm to establish local maxima or minima. It employs a greedy approach: This means that it moves in a direction in which the cost function is optimized.Features of a hill climbing algorithmĪ hill-climbing algorithm has four main features:

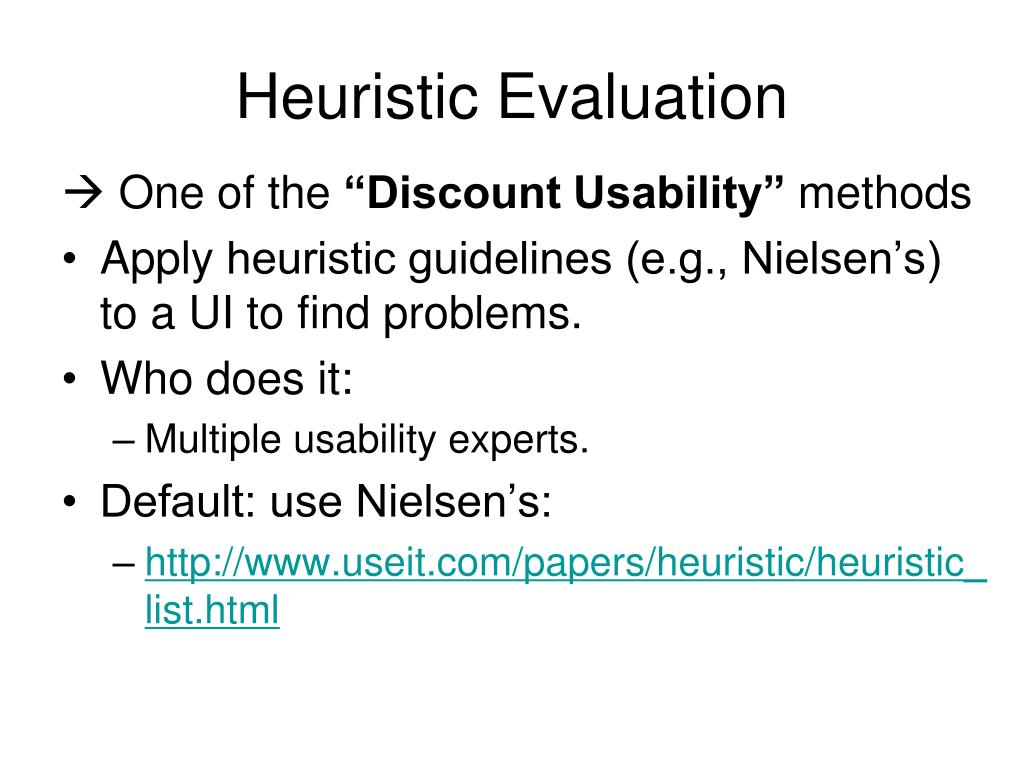

The peak state cannot undergo further improvements. This process will continue until a peak solution is achieved. When the current state is improved, the algorithm will perform further incremental changes to the improved state. This explains why the algorithm is termed as a hill-climbing algorithm.Ī hill-climbing algorithm’s objective is to attain an optimal state that is an upgrade of the existing state. The process of continuous improvement of the current state of iteration can be termed as climbing. The heuristic function is used as the basis for this precondition. It begins with a non-optimal state (the hill’s base) and upgrades this state until a certain precondition is met. This algorithm has a node that comprises two parts: state and value. This algorithm comes to an end when the peak is reached. Introduction to hill climbing algorithmĪ hill-climbing algorithm is a local search algorithm that moves continuously upward (increasing) until the best solution is attained. The article will also highlight the applications of this algorithm. It discusses various aspects such as features, problems, types, and algorithm steps of the hill-climbing algorithm. This article will improve our understanding of hill climbing in artificial intelligence. This algorithm is used to optimize mathematical problems and in other real-life applications like marketing and job scheduling. This thesis, or any portion thereof, may not otherwise be copied or reproduced without the written consent of the copyright owner, except to the extent permitted by Canadian copyright law.A hill-climbing algorithm is an Artificial Intelligence (AI) algorithm that increases in value continuously until it achieves a peak solution. License This thesis is made available by the University of Alberta Libraries with permission of the copyright owner solely for non-commercial purposes.Than the total time needed for the bootstrap process while the solutions obtained are still very close to optimal. 
The experimental results of this method on large search spaces demonstrate that the single instance of large problems are solved substantially faster Up to the point when the instance is solved in one sub-thread. The total time by which we evaluate this process is the sum of the times used by both threads Which uses the new heuristic to try to solve the instance. When a new heuristic is learned in the learning thread, an additional solving sub-thread is started The first solving sub-thread aims at solving the instance using the initial heuristic. The solving thread is split up into sub-threads. We alternate between the execution of two threads, namely the learning thread (to learn better heuristics) and the solving thread (to solve the test instance). To make the process efficient when only a single test instance needs to be solved, we lookįor a balance in the time spent on learning better heuristics and the time needed to solve the test instance using the current set of learned heuristics. The total time for the bootstrap process to create strong heuristics for large problems is several days. Heuristics that allow IDA* to solve randomly generated problem instances quickly with solutions very close to optimal. Until a sufficiently strong heuristic is produced.Ģ4-sliding tile puzzles, the 17-, 24-, and 35-pancake puzzles, Rubik's Cube, and the 15- and 20-blocks world. To generate a sequence of heuristics from a given weak heuristic We investigate the use of machine learning to create effective Author / Creator Jabbari Arfaee, Shahab.Bootstrap Learning of Heuristic Functions
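The greedy, no-backtracking loop described above for hill climbing can be illustrated with a short Python sketch. This is only one possible reading of the description, not a definitive implementation: it uses the steepest-ascent variant (always take the best neighbor), which the text does not specify, and the names `hill_climb`, `value`, `neighbors`, and `max_steps` are hypothetical names chosen for illustration.

```python
from typing import Callable, Iterable, Tuple, TypeVar

State = TypeVar("State")


def hill_climb(
    start: State,
    value: Callable[[State], float],
    neighbors: Callable[[State], Iterable[State]],
    max_steps: int = 10_000,  # illustrative safety cap, not from the article
) -> Tuple[State, float]:
    """Steepest-ascent hill climbing (an assumed variant).

    Each node is a (state, value) pair. The search only looks at the
    current state and its successors, and never backtracks.
    """
    current = start
    current_value = value(current)
    for _ in range(max_steps):
        # Greedy step: pick the successor with the highest value.
        best = max(neighbors(current), key=value, default=None)
        # Stop at a peak: no successor improves on the current state.
        if best is None or value(best) <= current_value:
            break
        current, current_value = best, value(best)
    return current, current_value


# Example 1: a single peak at x = 3.
peak = hill_climb(0, lambda x: -(x - 3) ** 2, lambda x: [x - 1, x + 1])
# peak == (3, 0)

# Example 2: a landscape with a local maximum before the global one.
landscape = [1, 3, 2, 5, 9, 4]
stuck = hill_climb(
    0,
    lambda i: landscape[i],
    lambda i: [j for j in (i - 1, i + 1) if 0 <= j < len(landscape)],
)
# stuck == (1, 3): the search stops at index 1 and never reaches the 9 at index 4.
```

The second example shows the consequence of the greedy, no-backtracking design: the search halts at the first peak it reaches, which may be a local rather than a global maximum.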
